Six.

After a really helpful meeting before last class, I’ve finally turned my thoughts for this project into a concrete idea. I’ve been playing around with musicalgorithms to figure out how to get layered data to sound good together, which is harder than it looks: most of the time, it just makes an awful mess of sound. So what I’m thinking of doing is plugging in the raw data and then working with the outcomes to create a more ‘curated’ version of the generated melodies.

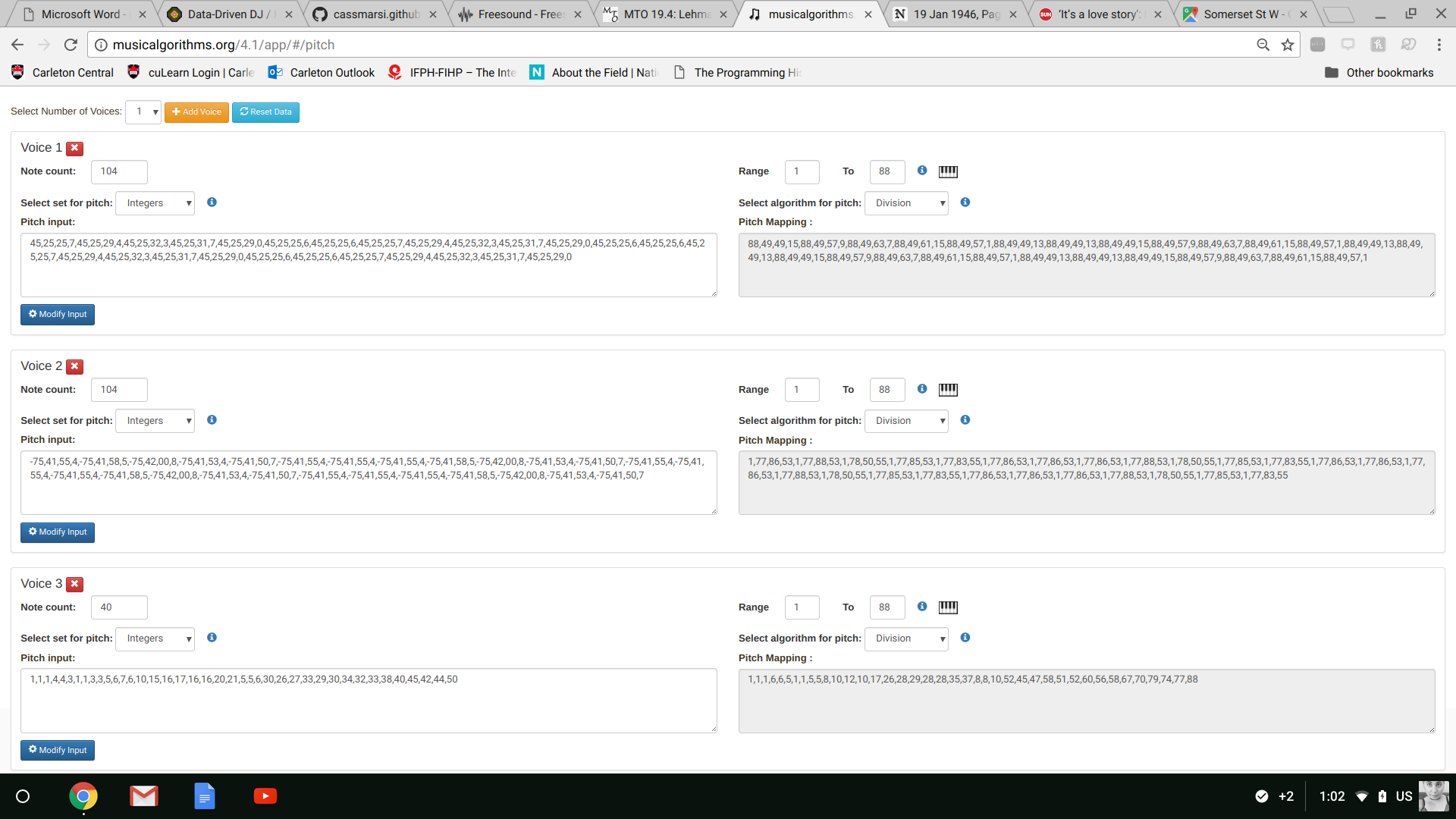

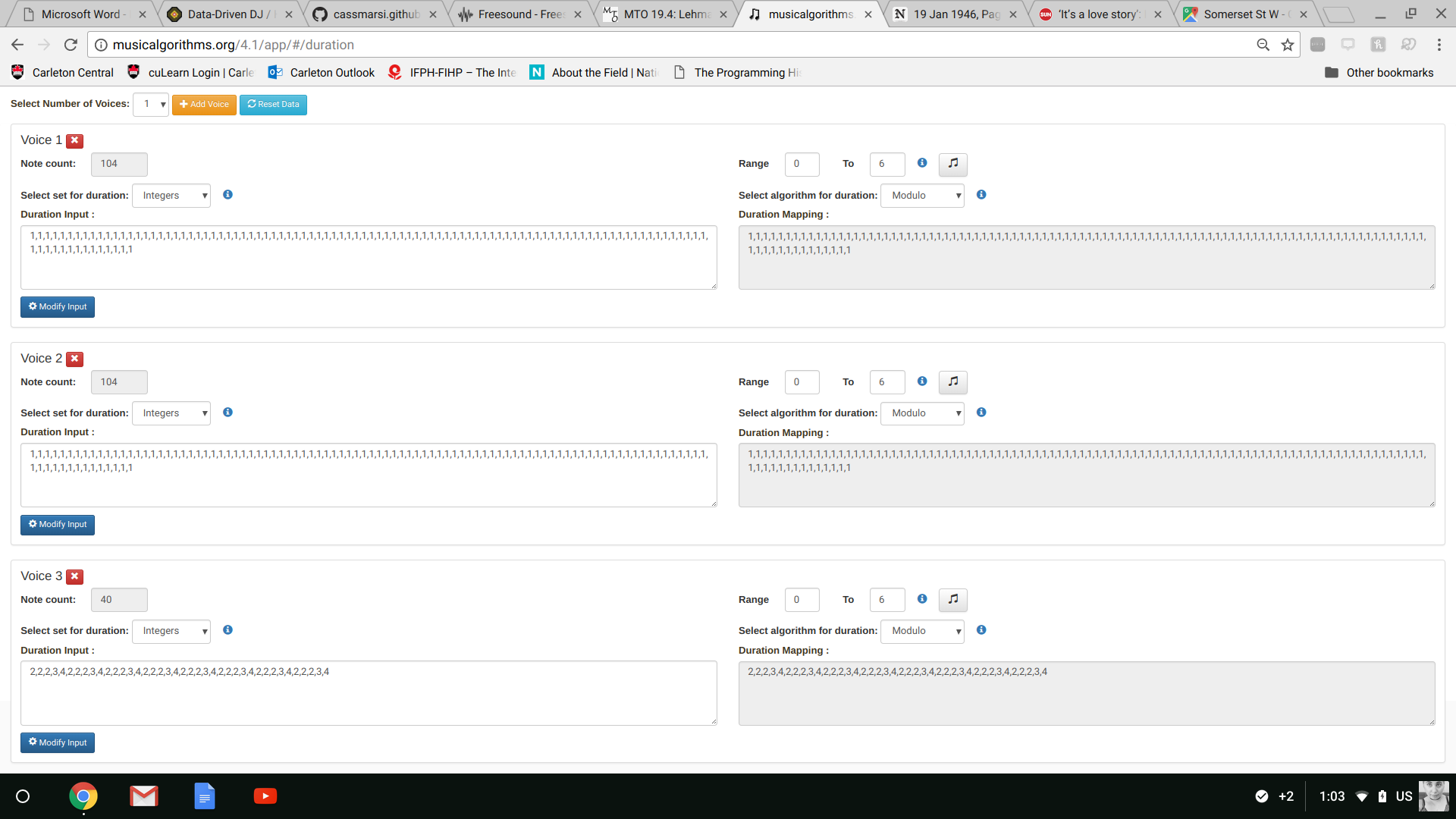

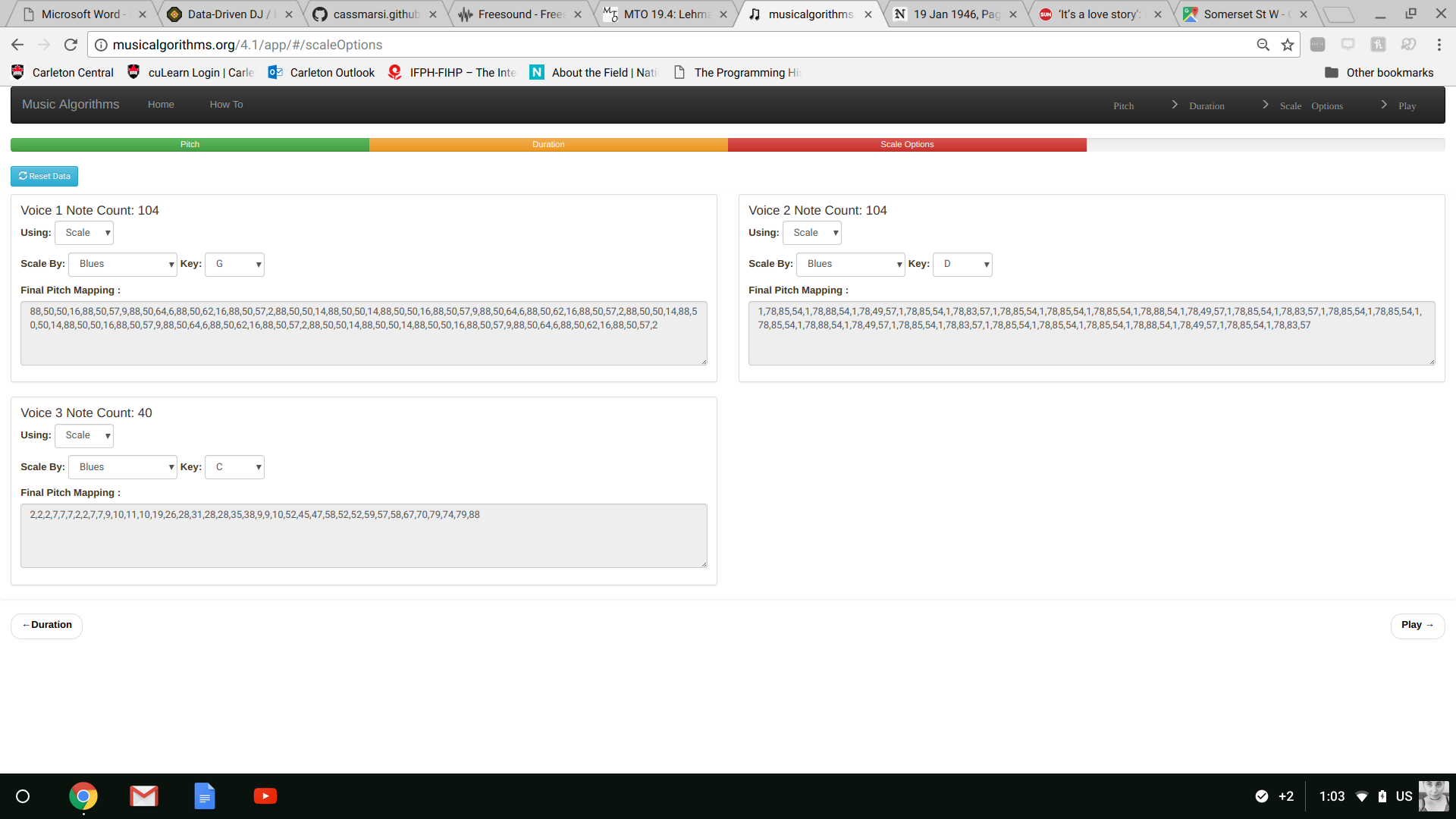

Here are some screenshots of the data I entered at each step (pitch, duration, and scale for each note). The data are latitude and longitude points for various spots around Parliament Hill.
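To make the mapping step a bit more concrete, here is a minimal Python sketch of linear parameter mapping, assuming latitude drives pitch and longitude drives duration. This is not how musicalgorithms works internally. The MIDI pitch range (48 to 84), the 1 to 4 beat durations, the C major scale used for snapping, the helper names, and the sample coordinates are all hypothetical choices for illustration. Snapping to a scale is just one simple way to tame the mess before any hand curation.

```python
# Hypothetical sketch of parameter mapping: coordinates -> (MIDI pitch, beats).
# Not musicalgorithms' own code; ranges, scale, and names are assumptions.

C_MAJOR = [0, 2, 4, 5, 7, 9, 11]  # semitone offsets within one octave (assumed scale)


def rescale(values, low, high):
    """Linearly map each value from the data's own min..max onto low..high."""
    vmin, vmax = min(values), max(values)
    if vmax == vmin:
        # All values identical: place them in the middle of the target range.
        return [(low + high) / 2 for _ in values]
    return [low + (v - vmin) * (high - low) / (vmax - vmin) for v in values]


def snap_to_scale(note, scale=C_MAJOR):
    """Move a MIDI note to the nearest pitch in the scale (ties go to the lower pitch)."""
    n = round(note)
    octave, pitch_class = divmod(n, 12)
    # Include neighbouring octaves so notes near an octave boundary snap correctly.
    candidates = [s + shift for s in scale for shift in (-12, 0, 12)]
    best = min(candidates, key=lambda c: (abs(c - pitch_class), c))
    return octave * 12 + best


def coords_to_notes(coords, pitch_range=(48, 84), beat_range=(1, 4)):
    """Latitude -> snapped MIDI pitch, longitude -> whole-beat duration."""
    lats = [lat for lat, _ in coords]
    lons = [lon for _, lon in coords]
    pitches = [snap_to_scale(p) for p in rescale(lats, *pitch_range)]
    beats = [round(b) for b in rescale(lons, *beat_range)]
    return list(zip(pitches, beats))


# Hypothetical points near Parliament Hill (not the data in the screenshots).
points = [(45.4236, -75.7009), (45.4250, -75.6990), (45.4215, -75.7030)]
print(coords_to_notes(points))
```

Because each coordinate list is rescaled over its own minimum and maximum, spots only a few metres apart still spread across the whole pitch range; a narrower range would keep nearby places sounding closer together.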

This is where I was at last week:

- thinking about sonification (musicalgorithms; parameter mapping; emotion and gender as concepts to explore through sound; codifying emotional scale; coding through soundtracks)

- how to use e-textiles effectively

- mapping: maybe this could be the textile component

- embodied experience: following a map that you’re actually holding vs. having to use an app the whole time

What’s the overlap?

That’s the question: finding where and how these interests can overlap.

So what about a historical film walkthrough? People could use a “dumb” smart textile (for example, a stylized map with a path through it), and this map would lead them to Aris through a QR code (only at the start and finish, with hidden waypoints in between that trigger audio) or to some other app, website, or digital archive. In this way, they would be following a sort of ‘treasure map’ that they hold in their hands, while the digital output (the sonification) plays at the same time, so they don’t have to keep looking at their phones. This is important to me because I want the project to feel like it’s happening in the space, not on the screen.
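The hidden-waypoint idea boils down to a proximity check: when the walker’s position comes within some radius of a waypoint, its audio starts. Aris or whatever app ends up being used would presumably handle this itself, so the Python sketch below only illustrates the idea and is not any app’s actual API. The 20 m radius, the waypoint names, the coordinates, and the `check_waypoints` helper are hypothetical; distance is computed with the standard haversine great-circle formula.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def check_waypoints(position, waypoints, triggered, radius_m=20):
    """Return the names of waypoints newly reached; each waypoint fires only once."""
    lat, lon = position
    fired = []
    for name, (wlat, wlon) in waypoints.items():
        if name not in triggered and haversine_m(lat, lon, wlat, wlon) <= radius_m:
            triggered.add(name)
            fired.append(name)  # a real app would start this waypoint's audio here
    return fired


# Hypothetical waypoints; in the walkthrough only start/finish would be visible.
waypoints = {"start": (45.4236, -75.7009), "clip_1": (45.4250, -75.6990)}
triggered = set()
print(check_waypoints((45.4236, -75.7009), waypoints, triggered))  # walker at the start
print(check_waypoints((45.4236, -75.7009), waypoints, triggered))  # same spot again
```

Keeping a `triggered` set means standing still at a waypoint does not replay its audio over and over.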

- Questions of materiality/history/digital

The only thing left is finding the story, or stories, I want to tell through this map.